It’s common for companies and marketers to treat user onboarding as an afterthought – something to bolt on once the product has been designed and is ready to go. Unfortunately, this means that far too few of us think about what results we want from user onboarding, or how we’ll measure success.

This guide offers practical advice on defining a user onboarding measurement strategy, from outlining goals to the nitty-gritty of measurement methodology. If you have any feedback or thoughts, hit us up at @nickelledapp on Twitter or via hello@nickelled.com.

The Value Journey for User Onboarding

Before we get into this, let’s agree on a definition: user onboarding is the process of “improving a person’s success with a product or service”. That’s the Wikipedia definition, and it’s one that we subscribe to here at Nickelled.

The key to a strong onboarding measurement strategy, then, is measuring success with your product or service, specifically for new users. Success can be defined in different ways, but we recommend one question above all others:

“How long does it take for a first-time user to extract value from my product/service?”

This definition is our favorite because it’s built on the concept of value creation – the idea that you’ve created something that your customers want to use because it meets their important needs better than competing products. Value creation is the best way to get a customer to start paying you or to keep paying you, which is why this definition works better than arbitrarily defining business goals as a primary measurement.

How do we define value extraction?

Different businesses will do this in different ways. At Nickelled, we know that a customer has extracted value from our service when they have created a guide for the website AND shared it with their intended audience – until it’s been used by that intended audience, we haven’t actually added any value to the business. So our value journey is all about getting people to the point where they’ve shared their guide.

Other businesses mark this point differently. In its early days, Facebook famously defined an ‘engaged user’ as one who had reached seven friends within ten days of signing up, presumably because, by seven friends, a user had found connections they cared about. Dropbox tries to persuade users to put one file in one folder on one device, and Twitter aims to get users to follow more than 30 people, giving them a feed rich enough to derive value from.
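Thresholds like Facebook’s boil down to a simple check: did the user perform N actions within D days of signing up? The sketch below is illustrative only. The function `reached_threshold` and its parameters are hypothetical, and nothing here reflects how Facebook actually computed its metric.

```python
from datetime import datetime, timedelta


def reached_threshold(signup_at, action_times, count=7, within=timedelta(days=10)):
    """Return True if at least `count` actions happened within `within` of signup.

    Hypothetical helper: the defaults mirror the "seven friends in ten days" example.
    """
    deadline = signup_at + within
    in_window = [t for t in action_times if signup_at <= t <= deadline]
    return len(in_window) >= count


signup = datetime(2024, 1, 1)
# Seven friend connections, one per day during the first week.
friends_added = [signup + timedelta(days=d) for d in range(1, 8)]
print(reached_threshold(signup, friends_added))      # True
print(reached_threshold(signup, friends_added[:6]))  # False
```

The same shape fits other activation goals, such as following a number of accounts, by changing `count` and `within`.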

How do we measure our value journey?

Every business that cares about user onboarding should be aware of two metrics related to the value journey:

- What percentage of our users extract value at all?

- How long does it take them to get there?

Obviously, you need to be aiming high for the first number, and low for the second. If you have an app in which only 50% of your signups ever extract any value, then you have a problem – either with marketing or with product. Similarly, if it takes a long time for those who do extract value to reach that point, you’re likely to experience high churn rates as users will simply give up.
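Both numbers can be computed from a basic event log. This is a minimal sketch. It assumes each event is a `(user_id, event_name, timestamp)` tuple and uses the hypothetical event names `signed_up` and `guide_shared` as the signup and value-extraction markers. Substitute your own definition of value.

```python
from datetime import datetime
from statistics import median

# Hypothetical event log: (user_id, event_name, timestamp).
events = [
    ("u1", "signed_up", datetime(2024, 1, 1, 9, 0)),
    ("u1", "guide_shared", datetime(2024, 1, 1, 11, 0)),
    ("u2", "signed_up", datetime(2024, 1, 1, 10, 0)),
    ("u3", "signed_up", datetime(2024, 1, 2, 8, 0)),
    ("u3", "guide_shared", datetime(2024, 1, 3, 8, 0)),
]


def value_journey_metrics(events, signup_event="signed_up", value_event="guide_shared"):
    """Return (share of signups that reached value, median time to value)."""
    signups, first_value = {}, {}
    for user, name, ts in events:
        if name == signup_event:
            signups[user] = ts
        elif name == value_event:
            # Keep only the first time each user reached the value point.
            if user not in first_value or ts < first_value[user]:
                first_value[user] = ts
    reached = [u for u in signups if u in first_value]
    rate = len(reached) / len(signups) if signups else 0.0
    durations = [first_value[u] - signups[u] for u in reached]
    return rate, (median(durations) if durations else None)


rate, ttv = value_journey_metrics(events)
print(f"{rate:.0%} of signups reached value; median time to value: {ttv}")
# 67% of signups reached value; median time to value: 13:00:00
```

Using the median rather than the mean keeps a handful of very slow users from dominating the time-to-value figure.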

There are, of course, a couple of caveats here. First, it’s not always clear when a user is extracting value, as you may offer different types of value. You may need to speak extensively with customers and potential customers to understand this. If it helps, you may find it easier to define minimum and maximum value: the point at which a customer will start paying you (minimum value), and the point at which they’ll be so happy that they’ll want to pay you more or tell all their friends about you (maximum value).

Secondly, value may morph with time – some apps have a compounding effect, becoming more valuable the longer you use them and the more you engage with them. In scenarios like this, the best strategy is normally to keep things simple – pick a top-of-funnel metric for minimum value (in a long funnel, there are simply too many variables) and stick to it.

Friction on the value journey

Armed with our value journey numbers, creating an onboarding measurement strategy becomes a lot easier. What we need to understand now is the friction that stops users from reaching the value extraction point.

When you plot your funnel, you’ll likely find that friction takes many forms. A funnel is usually a set of steps that take a user from signup to the value extraction point. For a social network, for instance, the funnel could consist of adding a profile picture, updating a biography, inviting some friends and then sending a message. At Nickelled, we call these Key Activation Events, and they should be a secondary measure in any user onboarding measurement strategy.

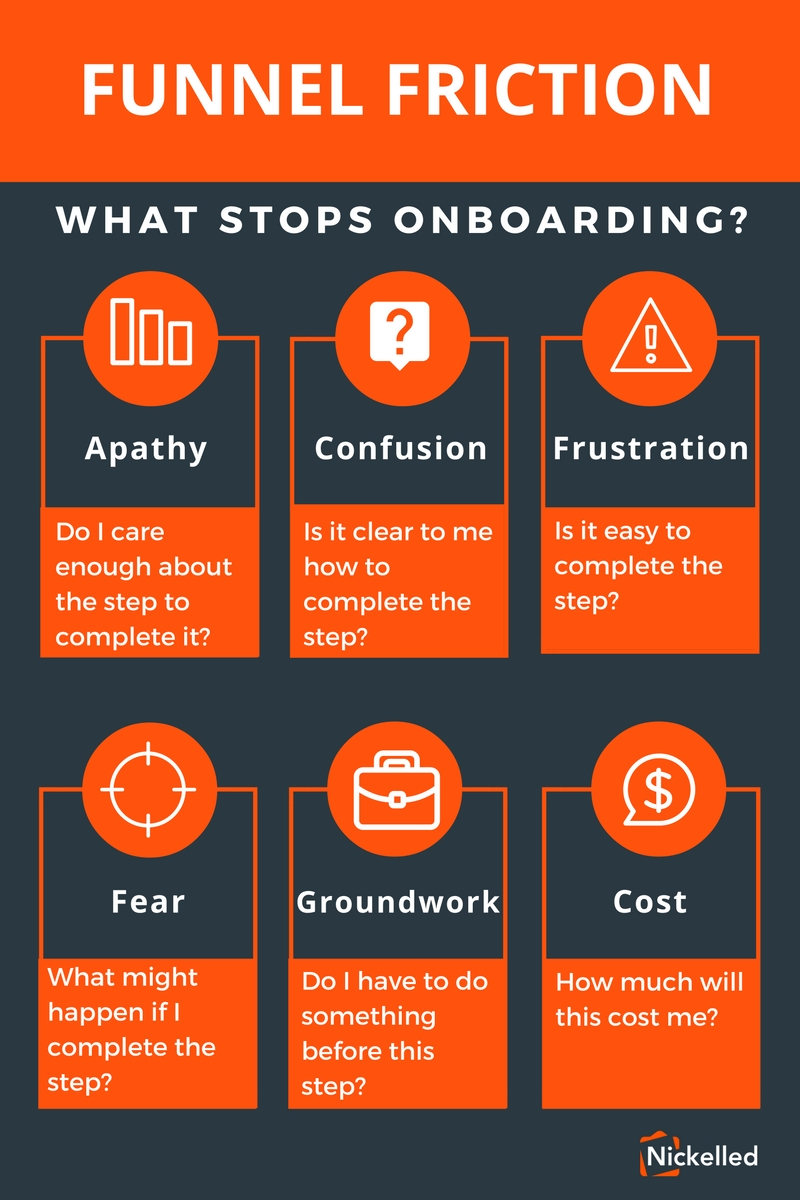
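Step-to-step conversion through a funnel of Key Activation Events takes only a few lines to compute. This sketch borrows the social-network steps above as hypothetical event names. To keep it simple, it counts a user at a step only if they have completed every earlier step, and it ignores the order in which those steps happened. Dedicated analytics tools typically also handle event ordering and conversion windows.

```python
# Hypothetical Key Activation Events for a social network, in funnel order.
FUNNEL = ["signed_up", "added_photo", "updated_bio", "invited_friends", "sent_message"]


def funnel_conversion(user_events, steps=FUNNEL):
    """Return users reaching each step and the conversion rate between adjacent steps.

    `user_events` maps user_id -> set of event names that user has completed.
    """
    counts = []
    for i in range(len(steps)):
        required = steps[: i + 1]
        # A user counts at step i only if every earlier step is also done.
        counts.append(sum(1 for done in user_events.values() if all(s in done for s in required)))
    rates = [counts[i] / counts[i - 1] if counts[i - 1] else 0.0 for i in range(1, len(counts))]
    return counts, rates


users = {
    "a": set(FUNNEL),
    "b": {"signed_up", "added_photo", "updated_bio"},
    "c": {"signed_up", "added_photo"},
    "d": {"signed_up"},
}
counts, rates = funnel_conversion(users)
for step, rate in zip(FUNNEL[1:], rates):
    print(f"{step}: {rate:.0%}")
```

The step with the lowest conversion rate (`invited_friends` in this toy data) is the first place to look for friction.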

What can stop users progressing from one stage to another? A wide variety of things, including:

- Apathy – do the users care enough about the step to complete it?

- Confusion – is it clear how to complete the step?

- Frustration – is it easy to complete the step?

- Fear – are they questioning what might happen if they complete the step?

- Groundwork – do they need to do something else before they can complete the step?

- Cost – is it too expensive (either in money or in time) to complete the step?

Identifying these forms of funnel friction should inform your user onboarding strategy – it will determine whether or not the problem is best solved by design, by customer education or by incentivization. From there, tracking KPIs becomes much easier.

Take confusion as an example. Suppose progression drops off suddenly at one stage of the funnel, and after speaking to a couple of users, you realise there’s a design problem that will take some time to fix. In the meantime, your focus has to be on getting users past this ‘bump’ and toward the value extraction point of your app.

As this is a customer education problem in the short term (and a design problem in the long term), you decide that an on-page guide (such as the type we make) is the best way to solve the problem. Now, you have something to measure – does the funnel completion rate pick up with the guide in place?
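One way to answer that question is to compare completion rates for users who saw the guide against those who didn’t, and check that the difference isn’t just noise. The sketch below uses a standard two-proportion z-test. This is our illustrative choice, not necessarily what any of the tools mentioned here do internally. It assumes users were split randomly (for example by an A/B test) and behave independently. The counts in the example are made up.

```python
from math import erf, sqrt


def two_proportion_z_test(done_a, total_a, done_b, total_b):
    """Two-sided z-test for a difference between two completion rates.

    Group A: users without the guide; group B: users with the guide.
    Returns (absolute lift, z statistic, p-value).
    """
    p_a = done_a / total_a
    p_b = done_b / total_b
    pooled = (done_a + done_b) / (total_a + total_b)
    se = sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        raise ValueError("Completion is 0% or 100% across both groups; the test is undefined.")
    z = (p_b - p_a) / se
    # Standard normal CDF: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    phi = (1 + erf(abs(z) / sqrt(2))) / 2
    p_value = 2 * (1 - phi)
    return p_b - p_a, z, p_value


# Hypothetical numbers: 120 of 400 completed without the guide, 160 of 400 with it.
lift, z, p = two_proportion_z_test(120, 400, 160, 400)
print(f"lift {lift:.1%}, z = {z:.2f}, p = {p:.4f}")
```

A p-value below a threshold chosen in advance (0.05 is conventional) suggests the lift is unlikely to be due to chance alone.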

A note on technical stuff:

We recommend Amplitude, Mixpanel or Google Analytics for measuring these types of funnel. Amplitude in particular makes it easy to visualize a funnel and to monitor changes in the completion rate for each step. If you’re trialling a design or content change using A/B testing, the simplest way we’ve found to measure it is via Optimizely or VWO.

Arguably the simplest way to set up user action tracking when you send data to multiple destinations is a tool like Segment. It lets you define the individual user actions you want to track and ‘pipe’ them into whichever apps you choose (at Nickelled, our Segment data flows into Intercom, Google Analytics, Amplitude and Drip). However, you can also set up user action tracking with Google Tag Manager, or with the JavaScript that most tracking apps provide themselves.

Measuring the success of tactics like this, which are designed to increase the funnel completion rate toward the value extraction point, is an important aspect of user onboarding measurement. We track these things in a company dashboard, which details both the value journey metrics, and the efforts we’re making to improve them.

Don’t forget the long term

In our experience, companies that measure user onboarding tend to do so over a span of hours rather than days. This is how things should be: remember, our primary objective here is to move customers to the value extraction point as soon as possible, to reduce the chances of them dropping out of the funnel altogether.

However, we recommend using cohort analysis to ensure your business has a long-term view of the changes that you are making. This is important because a change to the user onboarding flow may have an unintended consequence further down the line. We track these changes by creating behavioural cohorts and examining how their engagement changes.

As an example, consider the case of a music app which is trying to improve onboarding. As part of their experiments, the team adds a new step where users must follow an artist, which increases the percentage of people reaching the value extraction point (say, playing a song) considerably. However, on closer examination, it may transpire that following an artist decreases retention after one month, with those who have followed an artist less likely to return. Eventually, the team diagnose that the algorithmic music recommendations are more effective without followed artists – but without the behavioural cohort analysis, they may never have come to this conclusion.
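The music-app comparison comes down to computing retention separately for each behavioural cohort. This is a minimal sketch. It assumes per-user records with a signup date, a flag for whether the user followed an artist during onboarding, and the dates they were active. As an assumption, ‘retained after one month’ means any activity in the seven days starting 30 days after signup. All data is invented for illustration.

```python
from datetime import date, timedelta

# Hypothetical per-user records for the music-app example.
users = {
    "u1": {"signup": date(2024, 3, 1), "followed_artist": True, "active": [date(2024, 3, 2)]},
    "u2": {"signup": date(2024, 3, 1), "followed_artist": True, "active": [date(2024, 4, 3)]},
    "u3": {"signup": date(2024, 3, 1), "followed_artist": False, "active": [date(2024, 4, 1)]},
    "u4": {"signup": date(2024, 3, 1), "followed_artist": False, "active": [date(2024, 4, 5)]},
}


def retention_by_cohort(users, day=30, window=7):
    """Share of each behavioural cohort active in [day, day + window) days after signup."""
    totals, retained = {}, {}
    for record in users.values():
        cohort = "followed artist" if record["followed_artist"] else "did not follow"
        totals[cohort] = totals.get(cohort, 0) + 1
        start = record["signup"] + timedelta(days=day)
        end = start + timedelta(days=window)
        if any(start <= d < end for d in record["active"]):
            retained[cohort] = retained.get(cohort, 0) + 1
    return {cohort: retained.get(cohort, 0) / n for cohort, n in totals.items()}


print(retention_by_cohort(users))
# {'followed artist': 0.5, 'did not follow': 1.0}
```

In practice you would compare cohorts across many signup weeks. Because behavioural cohorts are self-selected, a gap like this flags something to investigate rather than proving cause.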

There are a number of tools that can be used to track cohorts, including Amplitude (though only the premium version, sadly) and Google Analytics.

In summary, we recommend structuring a user onboarding measurement strategy around three things:

- Our value journey is the basis for everything we do when studying our user onboarding measurements

- In trying to improve it, we track KPIs around reducing friction

- Over the long term, we look to understand the effects our changes have on retention

If you’ve had success tracking user onboarding measurement differently, we’d love to hear from you! You can reach us @nickelledapp or via email at hello@nickelled.com.

In addition, if you’d like more great posts on customer success and why it matters, sign up to the Nickelled newsletter. And finally, if you’re keen to improve your onboarding experience without deploying a single line of code, you can check out our guide to buying user onboarding software.